Talk on Sufficiently Advanced Technology

It’s trite to say that technological literacy is a must-have skill for the 21st century, but this is one of the things that really concerns me as a parent. I worry about my kids growing up surrounded by technology that they can’t hack, can’t fix, and that basically operates as a black box. Glowing screens with big juicy icons that deliver the goods, right up until they don’t. And then what?

A gap is definitely emerging. There is a growing divide between people who have a general understanding of how computers, phones, and the internet work (who can reason about them, make inferences, and hazard educated guesses) and those who sink below the event horizon where, as Arthur C. Clarke said, “any sufficiently advanced technology is indistinguishable from magic.”

This is dangerous, for a whole bunch of reasons.

First, because without broad technical literacy this gap will deepen and harden, and there are good odds we’ll end up in a world of haves and have-nots. This is how you get the dystopian “HYPER REALITY” outcome.

Further, once you start assigning magical (or spiritual) value to the work of technology, you create a tremendous risk of obscuring the human agents behind various decisions. People already tend to believe or trust what a computer tells them, but algorithms are not “math”; they’re a series of particular human decisions about how to use particular bits of math to produce a particular outcome.

The algos are also inhuman, and can be deeply weird in practice. To me, there’s real downside risk in creators shaping themselves to fit the software that tries to drive discovery. Content that’s SEO’ed to heck and back, or designed for maximum virality, is almost by definition inferior. There’s nothing really new about people pitching their stories for maximum exposure at the expense of quality (“if it bleeds, it leads”), but it does undermine the putative value of the World Wide Web if we’re producing all this output to pander to a piece of software rather than to a human audience.

This finally brings us to Artificial Intelligence, which is all the rage these days, and not without good reason. There are really cool advances being made, but the real progress is better understood as being in the field of Machine Learning specifically (not true “AI” so much), and in this field a major problem persists: the machines can only learn what we teach them. This is why every attempt to apply AI to law enforcement immediately dissolves into textbook racial profiling. This would be darkly funny if it were merely holding a mirror up to our criminal justice system, instead of potentially helping to lock people up.

It’s prejudice in, prejudice out, which is why I am inherently skeptical of any claims of breakthrough problem-solving from these technologies. I believe they have tremendous potential to eventually handle a lot of mundane tasks and, ideally, to help people spend more time on things with greater meaning, but they’re not going to solve any problem we haven’t first mastered ourselves. The machines only know what we teach them, for better and sometimes for worse.

However, the fact that there’s a non-trivial (and in fact powerful and influential) cohort of people who think sentient AI is likely, or even possible, represents to me the final big risk of losing technical literacy: otherwise smart people (who, more importantly, other people take seriously) completely losing touch with reality. AI heads are out of pocket, frankly. You may not be able to pinpoint the moment when someone switches from being a Computer Scientist to a Computer Priest, when they trade analytics for augury, but it’s clearly happening.

Somewhat ironically, tech journalists seem to be quite bad at this too. The spate of stories in which people “got scared” by the chatbot should be a cause of deep professional shame for anyone who wrote one.

There’s no such thing as accountability these days, but one would hope that professional pride would at least cause some self-reflection among the credulous rubes who write stories about “what the computer is thinking.” But it’s magic to them, so how could they really know?

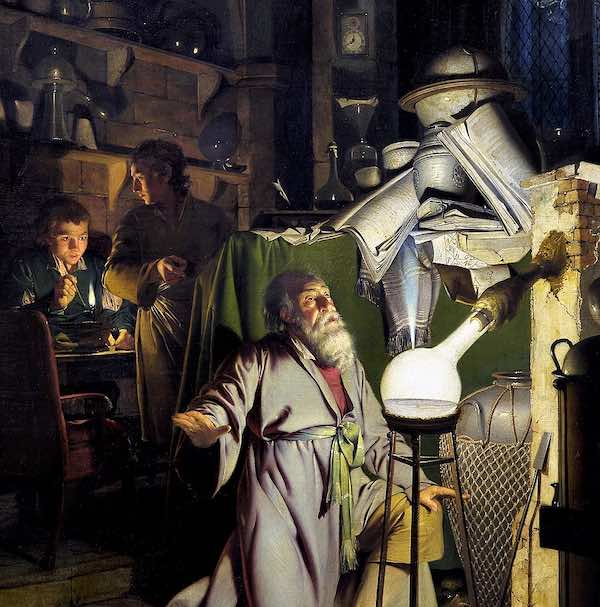

The Era of AI as Alchemy

I’ve been enjoying the Hell on Earth history podcast, and in their second bonus episode they got into the life and times of John Dee, an English alchemist of the Elizabethan era. I’m only vaguely familiar with this period of history, to be honest, mostly from reading Neal Stephenson’s Baroque Cycle, which really focuses on the latter part of the era, the time of Isaac Newton, when things finally began to become more scientific.

But anyway, the parallels between alchemy as a field and contemporary AI discourse were too striking not to note. You have people pursuing their project with white-hot missionary fervor, reaching out to touch the godhead, while also promising to literally manufacture wealth out of nothing. They’re fumbling through primitive forms of a science that is a century or more away from truly being born, trying to rationalize a world that is transforming itself tremendously as the old feudal forms give way to modern nation-states and capitalism. Oh, and there’s crazy disruption from climate change (this was during “the Little Ice Age,” which wrecked everyone’s harvests for, like, 20 years), so you can see how the rhyme of history is hard to resist.

By the time you get to Newton’s era, those changes in the political economy are pretty much done, though the separation of mysticism from science is still a work in progress. Sir Isaac himself was deeply into the occult alongside his more famous work in materials science, calculus, and mechanics. He most likely gave himself mercury poisoning through his attempts to find the Philosopher’s Stone.

Anyway, the story from the Alchemical Era that really hit me as an analogue for where we are with Artificial Intelligence was the accidental discovery of phosphorus. It was made by a German dude named Hennig Brand, who was boiling down gallons and gallons of piss, more or less just to see what would happen, but ultimately, again, in pursuit of that dang Philosopher’s Stone. It seems like a perfect metaphor: the investment of resources in a noxious process with messianic zeal, shooting for completely impossible outcomes. But hey, you know what? We figured out some cool, if random, stuff along the way. Maybe we’ll find a use for it.

My view is that AI is more likely to change the way we work than to replace current jobs one-for-one with machines. Properly applied (not a given, but possible), AI can eliminate a lot of the tedium and toil in mental work, which should be a good thing! However, it’s also almost certainly going to be abused and misused. There are already many stories of digital artists who are seeing their profession flattened, transformed, and de-skilled by generative image models. Mid-tier copywriters should be nervous about their future prospects, and we’re definitely going to see some insane fake-news-style content with the potential to create widespread confusion and panic.

And so, returning to my previous post, this is why it’s so important not to take any shit from a machine. The way technology shapes the course of our lives is not magic. It’s not spiritual destiny. It’s the result of predictable scientific capabilities and slightly less predictable choices by people in positions of power and authority. These are all things we can master.

We need to be literate in all of these things: to understand the principles behind the technology so we can stay grounded, and to understand the actors and structures behind the decisions. Most importantly, beyond understanding these decisions, to the extent that they affect our lives, we have a right to participate and have a say. We must not let technology become the new invisible hand.
